Curl Multiple Files Download Parallels 10

I have to download 2.5k+ files using curl. I'm using Drupal's built-in Batch API to fire the curl script without it timing out, but it's taking well over 10 minutes to grab and save the files. Add to this the processing of the actual files, and the potential runtime of this script is around 30 minutes. Server performance isn't an issue, as both the dev/staging and live servers are more than powerful enough. I'm looking for suggestions on how to improve the speed. The overall execution time isn't too big of a deal, as this is meant to be run once, but it would be nice to know the alternatives.

Parallel Wget

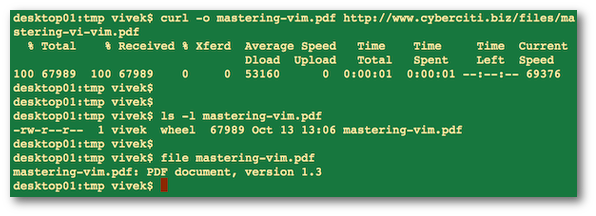

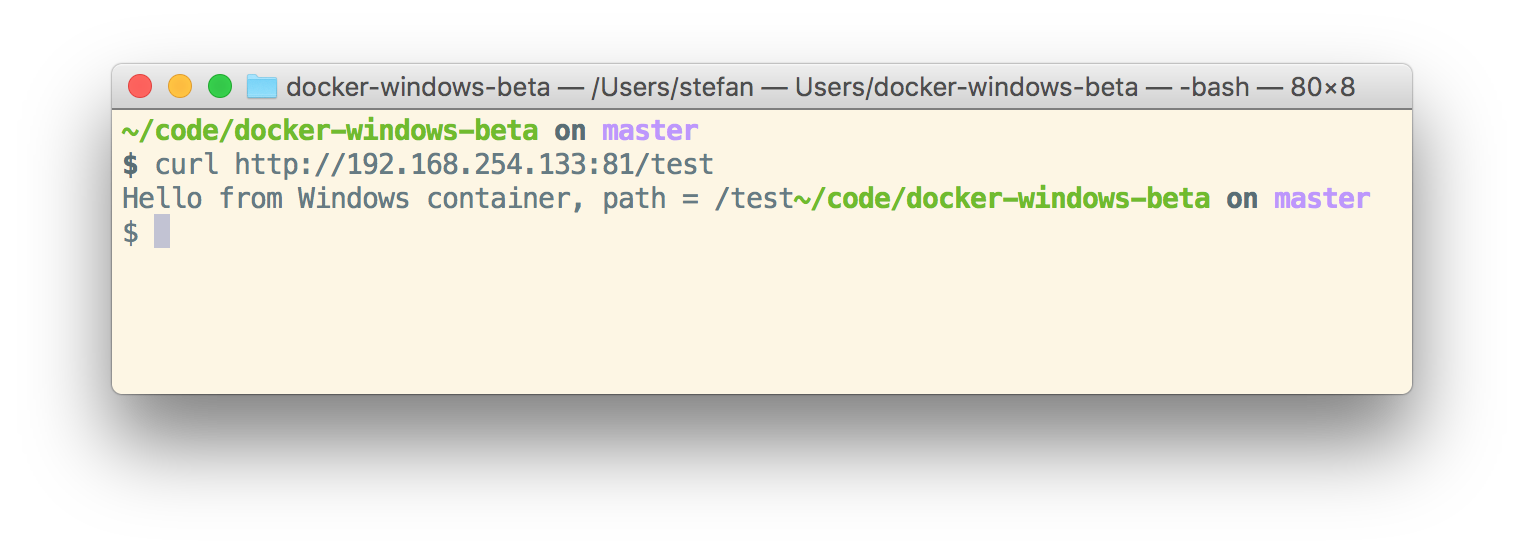

I want to download some pages from a website. I did it successfully using curl, but I was wondering whether curl can download multiple pages at a time, the way most download managers do; that would speed things up a little. Is it possible to do this with the curl command-line utility?

The current command I am using is

    curl '…' 2>&1 > 1.html

Here I am downloading pages 1 to 10 and storing them in a file named 1.html.

Also, is it possible for curl to write the output of each URL to a separate file, say URL.html, where URL is the actual URL of the page being processed?

Well, curl is just a simple UNIX process. You can have as many of these curl processes running in parallel as you like, each sending its output to a different file.

curl can use the filename part of the URL to generate the local file name. Just use the -O option (see man curl for details).

You could use something like the following:

    urls="…" # add more URLs here

    for url in $urls; do
        # run the curl job in the background so we can start another job
        # and disable the progress bar (-s)
        echo "fetching $url"
        curl "$url" -O -s &
    done
    wait # wait for all background jobs to terminate

For launching parallel commands, why not use the venerable make command-line utility? It supports parallel execution, dependency tracking and whatnot.

How? In the directory where you are downloading the files, create a new file called Makefile with the following contents:

    # which page numbers to fetch
    numbers := $(shell seq 1 10)

    # default target which depends on files 1.html .. 10.html
    # (patsubst replaces % with %.html for each number)
    all: $(patsubst %,%.html,$(numbers))

    # the rule which tells how to generate a %.html dependency
    # $@ is the target filename e.g. 1.html
    %.html:
    	curl -C - '…'$(patsubst %.html,%,$@) -o $@.tmp
    	mv $@.tmp $@

NOTE: The last two lines should start with a TAB character (instead of 8 spaces) or make will not accept the file.

Now you just run:

    make -k -j 5

The -j 5 option lets make run up to five jobs at the same time. The curl command I used stores the output in 1.html.tmp, and only if the curl command succeeds is it renamed to 1.html (by the mv command on the next line). Thus, if a download fails, you can just re-run the same make command and it will resume or retry the files that failed the first time. Once all files have been downloaded successfully, make will report that there is nothing more to be done, so there is no harm in running it one extra time to be 'safe'.

(The -k switch tells make to keep downloading the rest of the files even if a single download fails.)
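The background-job loop above starts every download at once, which is fine for ten pages but less so for thousands of files. As a rough sketch of a middle ground, xargs can cap the number of concurrent curl processes, much as make -j 5 does. The function name fetch_parallel, the file name urls.txt and the default of 5 jobs are assumptions made for illustration; -P is a widely available xargs extension (GNU and BSD) rather than part of POSIX.

```shell
#!/bin/sh
# Sketch: download every URL listed in a file, a few at a time.
# fetch_parallel and its defaults are hypothetical names, not from the answers above.

fetch_parallel() {
    url_file=$1      # text file with one URL per line
    jobs=${2:-5}     # maximum number of curl processes running at once

    # xargs -n 1 : pass exactly one URL to each curl invocation
    # xargs -P N : keep up to N invocations running concurrently
    # curl -s    : no progress bar
    # curl -f    : treat HTTP error responses as failures
    # curl -O    : save under the file name taken from the URL
    xargs -n 1 -P "$jobs" curl -s -f -O < "$url_file"
}
```

Called as `fetch_parallel urls.txt 8`, this would keep up to eight curl processes busy until the list is exhausted. Unlike the make approach, it does not remember which files already finished, so a re-run fetches everything again. curl 7.66.0 and later also has a built-in --parallel (-Z) option that may remove the need for a wrapper.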
